The DevOps profession is becoming increasingly popular and in demand in the world of information technology. Interestingly, a DevOps engineer is not quite a programmer: the role involves not only writing code but also solving many other kinds of problems, which makes the work varied and engaging.

What is DevOps?

DevOps is a methodology that brings together software development (Development) and software operations (Operations). Its goal is to improve collaboration between development teams and system administrators, and to automate and optimize the processes that make up the software lifecycle. This makes it possible to shorten the time needed to release new versions significantly and to improve product quality.

Core responsibilities of a DevOps engineer

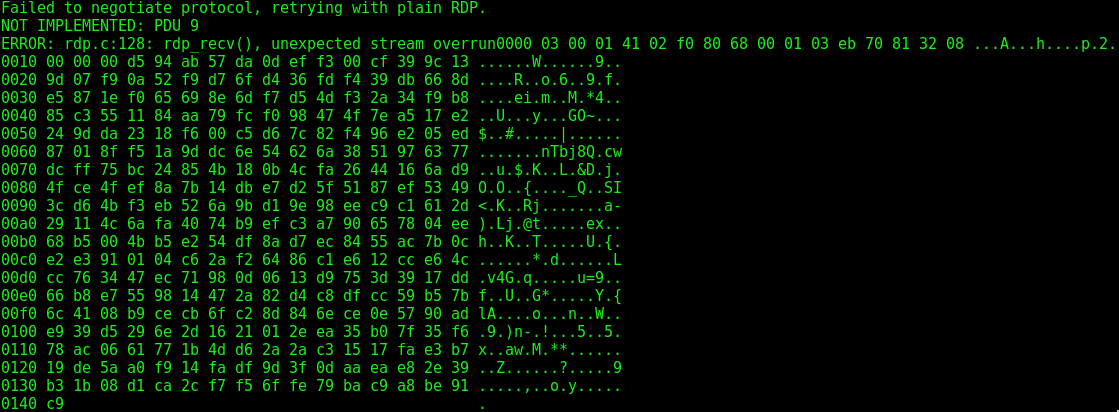

DevOps engineers are responsible for several key tasks. They automate processes by building and maintaining scripts and tools that cover the different stages of software development, testing, and deployment. Monitoring and logging are also an important part of the job: this includes installing and configuring monitoring systems that track the behavior of applications and infrastructure in real time. Configuration management is handled with tools such as Ansible, Puppet, or Chef to keep systems running in a stable, consistent state. Adopting continuous integration and continuous deployment (CI/CD) practices makes it possible to fix bugs and ship new features quickly. In addition, DevOps engineers are responsible for the security of software and infrastructure, including access control, data encryption, and vulnerability detection.
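To make the idea of an automated build, test, and deploy sequence more concrete, here is a minimal sketch in Python. It is not tied to any real CI/CD product: the names `Stage` and `run_pipeline` are hypothetical, and the stages are stand-in functions that simply return success or failure. The one behavior it illustrates is that a pipeline runs its stages in order and stops at the first failure, so a broken build never reaches deployment.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Stage:
    """One pipeline step: a name plus a callable that returns True on success."""
    name: str
    run: Callable[[], bool]


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first failure.

    Returns a log with one "name: ok" or "name: failed" entry
    for each stage that actually ran.
    """
    log = []
    for stage in stages:
        ok = stage.run()
        log.append(f"{stage.name}: {'ok' if ok else 'failed'}")
        if not ok:
            break  # later stages (such as deploy) must not run after a failure
    return log


# Hypothetical stages for illustration only; real ones would call a build
# tool, a test runner, and a deployment script.
example = [
    Stage("build", lambda: True),
    Stage("test", lambda: False),
    Stage("deploy", lambda: True),
]
print(run_pipeline(example))  # ['build: ok', 'test: failed']
```

In this example the failing test stage halts the run, so the deploy stage is never executed. Real CI/CD systems add much more on top of this (parallel jobs, artifacts, notifications), but the stop-on-failure ordering is the core of the practice.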

Advantages and challenges of the profession

The DevOps profession has a number of advantages that make it attractive to many specialists. First, variety of work: DevOps engineers deal with many aspects of software development and operations, which keeps the job interesting and broad in scope. Second, high demand: every year more companies adopt DevOps practices, which creates strong demand for qualified specialists in the field. Third, opportunities for career growth: specialists can develop in different directions, such as systems architecture, security, or team management. Finally, competitive pay: because of the high demand and the complexity of the work, DevOps engineers usually earn high salaries.

One of the biggest advantages of working in DevOps is the opportunity for continuous professional development. The technology world changes quickly, and DevOps engineers are constantly at the forefront of those changes. They get to work with the newest technologies and tools, which not only sharpens their skills but also opens up new paths for career growth.

However, the profession also has its challenges. Continuous learning: technologies evolve rapidly, so DevOps engineers must keep improving their skills and knowledge. A high level of responsibility: the stable operation of systems depends on DevOps engineers, so they have to be ready to respond quickly to unexpected situations. Cross-disciplinary knowledge: the profession requires expertise in many areas, from programming to system administration and security.

How the field is developing

DevOps is an interesting and promising profession for those who want to combine software development and operations skills. It offers varied work, strong demand on the job market, opportunities for career growth, and competitive pay. At the same time, it requires continuous learning and a readiness to take on a high level of responsibility. DevOps is a path to building efficient and reliable software solutions, which matters to the success of any modern company.

The development of the DevOps profession is also tied to the widespread use of cloud technologies, which adds another layer of complexity and interest to the work. Cloud platforms such as AWS, Google Cloud, and Microsoft Azure have become an integral part of modern IT infrastructure. DevOps engineers often work with these platforms, configuring and maintaining complex distributed systems. This requires a deep understanding of how cloud services work and the ability to use their capabilities effectively.

Overall, the DevOps profession offers a unique opportunity to work at the intersection of development and operations, combining technical knowledge with management skills. It opens the door to many opportunities and challenges, which makes it attractive to those who seek continuous growth and improvement in the world of information technology.